Results of VPRO – VicarVision hackathon

What can you do if you can read the emotions on a face in real time?

That’s the question 30 coders, designers and story makers tried to answer during a 2-day hackathon organized by VPRO and VicarVision.

We had an amazing time and would love to share the results.

Story amplifier (winner)

Tell a story while FaceReader automatically enriches it with sound and image effects.

The storyteller’s facial expressions were measured while a matching music fragment was added to the story in real time (e.g. sad face -> classical music).

Furthermore, a speech-to-text-to-Flickr engine was developed to support the story with nice images. The image effects were controlled by facial expressions.
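The expression-to-music step described above can be illustrated with a minimal sketch. It is not the team's code: the function name `pick_music`, the score format, the threshold and every mapping except "sad -> classical" are assumptions made for illustration.

```python
# Hypothetical mapping from expression label to music genre.
# Only "sad -> classical" is taken from the text; the rest is assumed.
MUSIC_FOR_EMOTION = {
    "sad": "classical",
    "happy": "pop",
    "angry": "metal",
}


def pick_music(scores, threshold=0.5, default="ambient"):
    """Return a genre for the strongest expression, or a default.

    `scores` is assumed to be a dict of expression label -> intensity
    in the range 0..1, as an expression tracker might report per frame.
    """
    if not scores:
        return default
    # Take the expression with the highest intensity.
    emotion, intensity = max(scores.items(), key=lambda kv: kv[1])
    # Ignore weak expressions so the music does not flicker.
    if intensity < threshold:
        return default
    return MUSIC_FOR_EMOTION.get(emotion, default)


print(pick_music({"sad": 0.8, "happy": 0.1}))
```

The threshold is one possible way to keep the soundtrack stable when no expression clearly dominates.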

FacePainter

Create a hands-free drawing with your face as a joystick and use your expression as a color/pencil-tool picker. The colors you pick are hidden during drawing, making it a magical surprise after you are done.

The project used the FaceReader gaze direction, the face position and a lot of visual magic.

Expression hero

Try to explode images with the emotion that belongs to the image.

E.g. a shark comes at you and you have to express fear before it is too close.

A very useful FaceReader-to-Unity plugin was made.

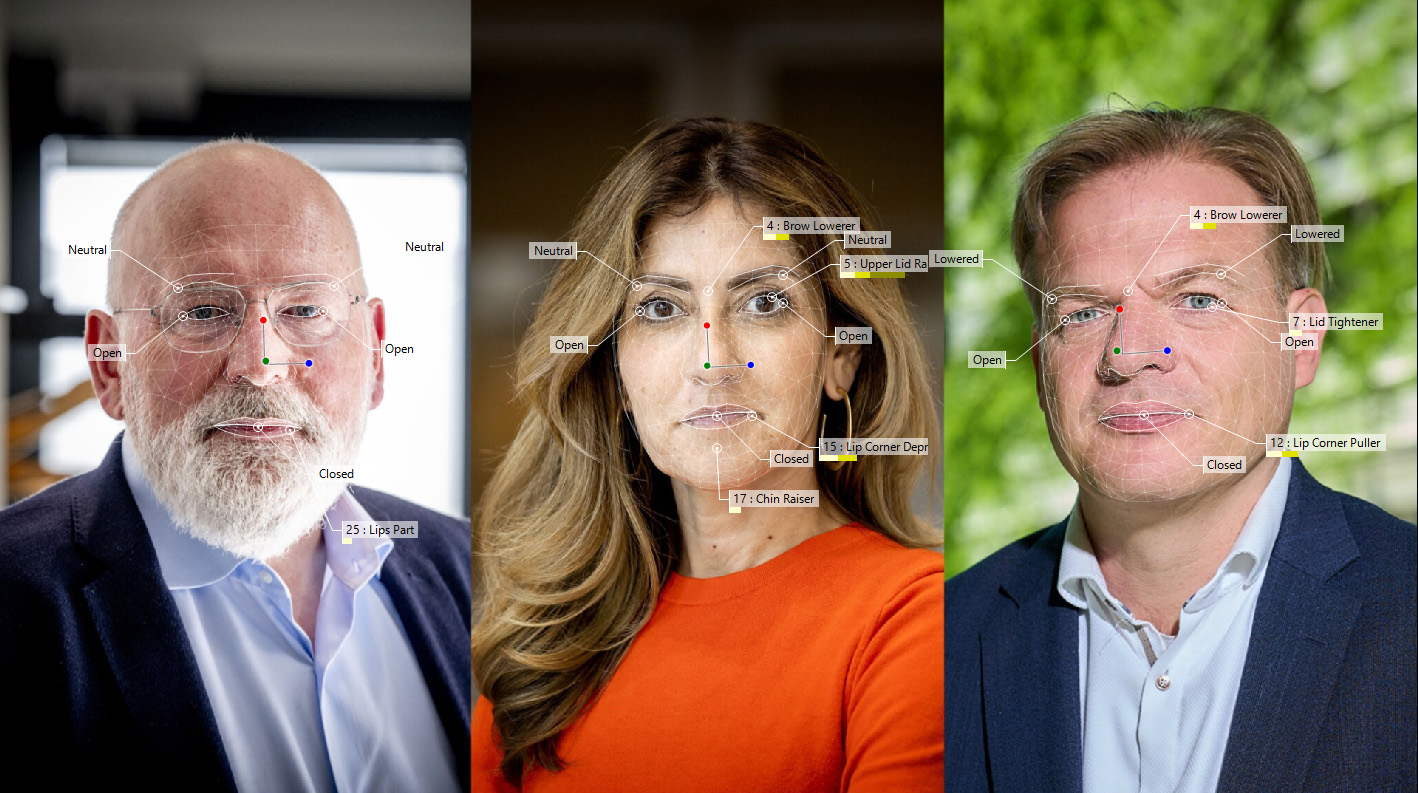

SmartMike

A microphone for interviewers that compares facial expression with the emotional content of the spoken language. The mike displays ‘bullshit’ if these two sources are not in harmony.

E.g. (sad face) + “we are happy with the negotiations” -> Bullshit!
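The mismatch check can be sketched roughly as follows. This is a toy illustration, not the hackathon implementation: the valence table, the word lists and the function names are all assumptions, and a real system would use a proper sentiment model for the spoken text.

```python
# Assumed valence per facial expression label (hypothetical values).
EXPRESSION_VALENCE = {
    "happy": 1,
    "neutral": 0,
    "surprised": 0,
    "sad": -1,
    "angry": -1,
    "scared": -1,
}

# Tiny assumed word lists standing in for a real sentiment analysis.
POSITIVE_WORDS = {"happy", "glad", "great", "pleased"}
NEGATIVE_WORDS = {"sad", "angry", "disappointed", "terrible"}


def text_valence(sentence):
    """Return +1, 0 or -1 for the emotional tone of a sentence."""
    words = {w.strip(".,!?\"'").lower() for w in sentence.split()}
    score = len(words & POSITIVE_WORDS) - len(words & NEGATIVE_WORDS)
    return (score > 0) - (score < 0)


def smartmike_verdict(expression, sentence):
    """Flag a mismatch when face and speech have opposite valence."""
    face = EXPRESSION_VALENCE.get(expression, 0)
    speech = text_valence(sentence)
    return "Bullshit!" if face * speech < 0 else "OK"


print(smartmike_verdict("sad", "we are happy with the negotiations"))
```

Only opposite signs count as a mismatch here, so a neutral face or neutral sentence never triggers the verdict.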

The project connected a mobile phone to FaceReader through a local web server that streamed the FaceReader pie chart to the phone.

MoodBook

The emotion you express filters the news articles you read. E.g. an expression of happiness highlights positive news articles and blurs other articles.

An automatic text-to-emotion API was used, and JavaScript code was developed that visually changed the articles in a Facebook timeline.
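The filtering idea can be sketched in a few lines. The sketch below is an assumption-laden illustration, not the team's JavaScript: the article format, the `style_articles` function and the expression-to-sentiment table are hypothetical, and the sentiment labels stand in for whatever the text-to-emotion API returned.

```python
# Assumed link between the reader's expression and article sentiment.
# Only "happiness -> positive" is taken from the text.
SENTIMENT_FOR_EXPRESSION = {
    "happy": "positive",
    "sad": "negative",
}


def style_articles(articles, expression):
    """Return (title, style) pairs: matching articles are highlighted,
    all others blurred. With an unknown expression nothing is changed."""
    wanted = SENTIMENT_FOR_EXPRESSION.get(expression)
    styled = []
    for article in articles:
        if wanted is None:
            style = "normal"
        elif article["sentiment"] == wanted:
            style = "highlight"
        else:
            style = "blur"
        styled.append((article["title"], style))
    return styled


timeline = [
    {"title": "Local team wins cup", "sentiment": "positive"},
    {"title": "Storm damages harbour", "sentiment": "negative"},
]
print(style_articles(timeline, "happy"))
```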

Furthermore, the emotional content of 140 articles was annotated and compared. A statistical analysis concluded that machines are getting intelligent, but humans still outperform them.

Let’s see if this statement still holds 5-10 years from now!

More info:

Presentation results (video)

Article (Dutch) about the process:

http://www.vpro.nl/medialab/nieuws/Hackathon-Emoties-en-algoritmes.html