FaceReader 8 release! – More flexibility and extra possibilities

We are happy to announce the release of FaceReader 8, perhaps our most ambitious and elaborate release so far. It is now possible to measure the expressions of children under the age of 2 (Baby FaceReader), to record audio and make infrared recordings, to measure consumption behavior, to analyze left and right Action Units separately, and to create your own expressions. For a more detailed overview, see the "what's new" page on our partner's site. In this blog post, we take a closer look at the "create your own expression" module.

Create your own expression

Facial expressions such as happiness or sadness are composed of several muscle movements of the face. For example, when someone smiles, the corners of the mouth are pulled up and the cheeks are raised. Psychologist Paul Ekman has categorized these specific movements as Action Units in the Facial Action Coding System (FACS). Action Units offer a more objective and detailed way to analyze facial activity than basic emotional expressions.

Scientists generally agree that there are a few basic emotions (happiness, sadness, anger, fear, disgust) that are frequently and reliably recognized. However, there are many more emotions and behaviors that someone can express (for example, shame, cognitive load, or pain). These more complex emotions may show different patterns depending on culture or context, and are therefore not included in FaceReader by default. Nevertheless, they are very interesting to investigate, which is why the previous 7.1 release included the experimental affective attitudes of interest, boredom, and confusion. To further facilitate the study of a broader range of emotions, you can now create your own custom expressions.

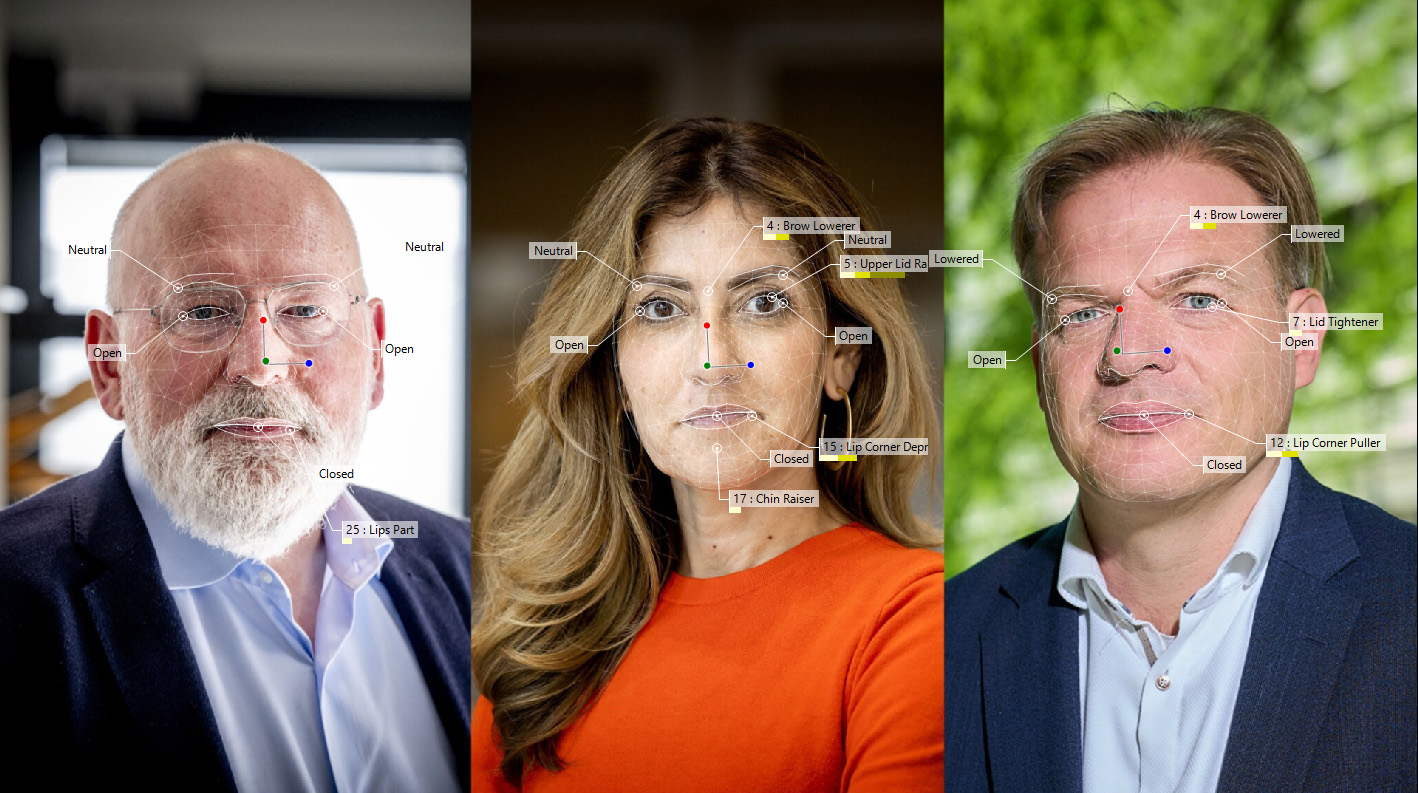

The custom expression module is useful for both research and commercial applications. You can combine Action Units and other variables from FaceReader into your own expression algorithm (see image).
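To make the idea of an expression algorithm more concrete, here is a minimal Python sketch of the kind of logic such an expression could encode. It is not FaceReader's interface or export format: the function names, the `AU06`/`AU12` keys, the 0 to 1 intensity scale, and the threshold are all assumptions chosen for illustration. The sketch combines the two movements of a smile mentioned above, raised cheeks (Action Unit 6 in FACS) and pulled-up mouth corners (Action Unit 12), and treats a smile without cheek raising as a possible false smile.

```python
from typing import Mapping


def duchenne_smile_score(au: Mapping[str, float]) -> float:
    """Return a score that is high only when both AU6 and AU12 are active.

    `au` maps hypothetical Action Unit labels to intensities,
    assumed here to lie between 0.0 and 1.0.
    """
    cheek_raiser = au.get("AU06", 0.0)       # cheeks raised
    lip_corner_puller = au.get("AU12", 0.0)  # mouth corners pulled up
    # Taking the minimum means the weaker of the two movements limits the score.
    return min(cheek_raiser, lip_corner_puller)


def classify_smile(au: Mapping[str, float], threshold: float = 0.3) -> str:
    """Label one frame of Action Unit intensities (threshold is an assumption)."""
    if au.get("AU12", 0.0) < threshold:
        return "no smile"
    if au.get("AU06", 0.0) >= threshold:
        return "genuine smile"
    return "possible false smile"


if __name__ == "__main__":
    frame = {"AU06": 0.8, "AU12": 0.6}
    print(duchenne_smile_score(frame), classify_smile(frame))
```

In practice, the weights and thresholds of a custom expression would need to be validated against your own data rather than taken from this example.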

Useful applications

Custom expressions make it easier to test specific hypotheses about certain expressions. For example, you can easily differentiate between real and false smiles. The affective attitudes are also available in the custom expressions browser, so you can use them as examples of how to build a more complex custom expression. If you find a custom expression to be especially relevant in a certain setting, such as interviews or usability research, you can use the new module to get live emotion results. If you want to try this new feature, contact Noldus or buy or try FaceReader here. You can also contact us if you need help creating your own expressions.